Thanks to recent technological advances in computer processing hardware, machine vision cameras, and open source software tools, fishery researchers at the Alaska Fisheries Science Center are taking the next steps in developing electronic monitoring systems and image processing applications that would automate data collection from images captured onboard vessels. The eventual goal of real-time image processing is to supply scientific data that provide greater certainty in managing ocean resources and supporting sustainable fishing practices.

In 2018, the North Pacific Fishery Management Council and NOAA Fisheries implemented an electronic monitoring program to provide a monitoring alternative for longline vessels, where accommodating an observer can be logistically difficult.

“This program’s integration of electronic monitoring data directly into the catch estimation data stream marked a milestone,” explains Farron Wallace, former senior research fisheries biologist at the Alaska Fisheries Science Center and now director of the Southeast Fisheries Science Center Galveston Laboratory. “However, the systems are not yet able to collect detailed data on individual fish length and weight as an observer does—data that are critical to support stock assessment modelling and catch estimation.”

Additionally, although useable observer data in the North Pacific are either uploaded to a database several times daily via satellite or uploaded at the end of a trip, vessels using electronic monitoring systems store imagery on hard drives, which are then mailed after the trip to video reviewers who process and extract key information. This time-consuming procedure can significantly delay data upload, a concern when data timeliness is essential for fisheries management—particularly for those management programs that have prohibited species catch limits, maximum retainable allowances, or other in-season quota restrictions.

To address these challenges, the Alaska Fisheries Science Center worked with NOAA's Fisheries Information System program, a state-regional-federal collaboration whose mission is to improve access to comprehensive, high-quality, timely fishery-dependent data, to find an effective and cost-efficient monitoring solution. Working in close collaboration with industry on system design, operability, and functionality will help ensure that the systems fit seamlessly into fishing operations and vessel configurations, and that industry partners trust the systems to work effectively.

The new systems use cameras built for industrial inspection (that is, for checking whether products meet specifications) that can withstand the range of temperatures and mechanical stresses typical of commercial fishing operations. The systems can integrate data from a suite of sensors, including GPS, hydraulic pressure, and drum rotation monitors, to determine set and haul positions and collect effort data. The sensors indicate when a haul back is occurring, which triggers image collection when needed and allows stand-by mode at other times.
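
The sensor-triggered switching between image collection and stand-by can be pictured with a minimal sketch. It is not the Center's implementation: the `SensorReading` type, the threshold values, and the rule that both hydraulic pressure and drum rotation must be elevated during a haul back are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class SensorReading:
    """One hypothetical sample from the vessel's sensor suite."""
    hydraulic_pressure_psi: float
    drum_rpm: float


# Illustrative thresholds only; real values would be tuned per vessel.
PRESSURE_THRESHOLD_PSI = 500.0
DRUM_RPM_THRESHOLD = 1.0


def is_hauling(reading: SensorReading) -> bool:
    """Assume a haul back when pressure and drum rotation are both elevated."""
    return (
        reading.hydraulic_pressure_psi >= PRESSURE_THRESHOLD_PSI
        and reading.drum_rpm >= DRUM_RPM_THRESHOLD
    )


def camera_modes(readings: Iterable[SensorReading]) -> List[str]:
    """Map each reading to 'capture' during a haul back, else 'standby'."""
    return ["capture" if is_hauling(r) else "standby" for r in readings]


if __name__ == "__main__":
    samples = [
        SensorReading(120.0, 0.0),   # gear idle
        SensorReading(900.0, 12.0),  # hauling
        SensorReading(880.0, 0.0),   # pressure up, drum stopped
    ]
    print(camera_modes(samples))
```

A production system would likely smooth the signals over time so that brief pauses in a haul do not toggle the cameras, which this sketch leaves out.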

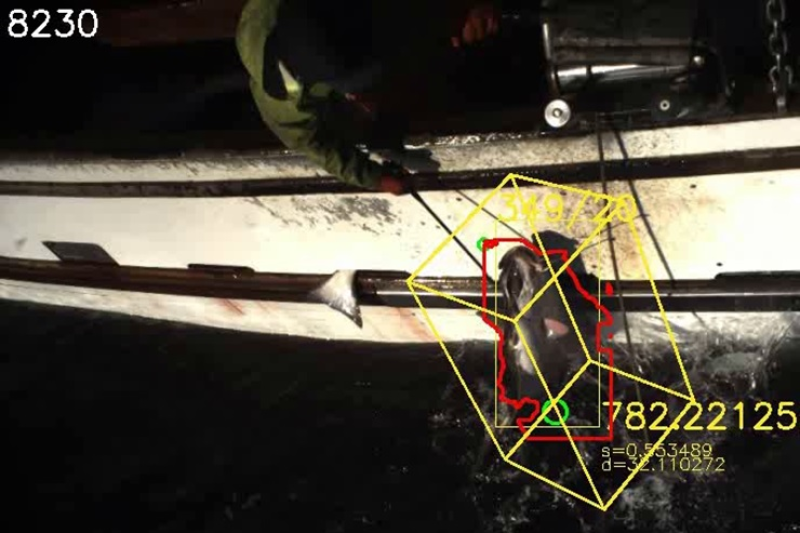

“The ‘stereo-camera’ system uses two cameras that pair images, allowing for highly precise measurements—even of flopping fish being hauled onboard,” says Wallace. “Right now we’re working on incorporating the latest developments in artificial intelligence into the systems, including a machine learning algorithm, so they’ll eventually be able to automatically process length measurements and highly accurate species identification for most common species.”
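
To show how a paired image can yield a length, here is a minimal sketch of textbook stereo triangulation. The article does not describe the algorithm the system uses, so this assumes an idealized, rectified pinhole camera pair; the function names, parameters (`focal_px`, `baseline_m`, `cx`, `cy`), and the snout and tail pixel coordinates are hypothetical.

```python
import math
from typing import Tuple

Point3D = Tuple[float, float, float]


def triangulate(x_left: float, x_right: float, y: float,
                focal_px: float, baseline_m: float,
                cx: float, cy: float) -> Point3D:
    """Recover a 3D point (in meters) from a matched pixel in a rectified pair.

    Depth follows Z = f * B / d, where d is the horizontal disparity in pixels.
    """
    disparity = x_left - x_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity
    x = (x_left - cx) * z / focal_px
    y_m = (y - cy) * z / focal_px
    return (x, y_m, z)


def fish_length_m(snout: Tuple[float, float, float],
                  tail: Tuple[float, float, float],
                  focal_px: float, baseline_m: float,
                  cx: float, cy: float) -> float:
    """Straight-line distance between triangulated snout and tail points.

    Each landmark is given as (x_left, x_right, y) in pixels.
    """
    p_snout = triangulate(*snout, focal_px, baseline_m, cx, cy)
    p_tail = triangulate(*tail, focal_px, baseline_m, cx, cy)
    return math.dist(p_snout, p_tail)


if __name__ == "__main__":
    # Made-up camera parameters and landmark pixels, for illustration only.
    length = fish_length_m(
        snout=(400.0, 300.0, 500.0),
        tail=(900.0, 800.0, 500.0),
        focal_px=1000.0, baseline_m=0.1, cx=500.0, cy=500.0,
    )
    print(f"{length:.3f} m")
```

Because both landmarks are located in three dimensions, the measured length does not depend on the fish lying parallel to the image plane, which is one reason a stereo pair can cope with a fish that is moving as it comes aboard. A straight snout-to-tail distance would still underestimate the length of a strongly curved fish.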

In 2019, these capabilities are being tested in real time onboard fishing vessels to ensure accuracy and full accounting. Ultimately, the systems should reduce the volume of data needed to gather necessary information, which would improve data transfer rates as well as data storage and management, both on vessels and on land-based servers. Capturing fish length through the images could also improve data collection on species that are not usually landed, are too big (such as sleeper sharks), or are too difficult for observers to sample (such as giant grenadier, which easily drop off at the rail). Finally, the systems’ spatial image capabilities could be used to map high bycatch areas and improve future management strategies to lower bycatch.